Disclaimer: This post is a long one. If I ever have to write a book about programming, this is the topic I’d pick. Trust me: if only tech universities taught more about this, a lot more people would have been able to sleep through the night, have hot meals and enjoy reunions with family and friends. If you choose to continue reading and apply the practices described here, you’ll be glad you did.

Background

Fault-tolerant systems are one of the keys to succeeding in today’s enterprise software. Let me tell you a story.

In the past, around the 80s, companies could afford the luxury of delivering “a solution” to a problem without having to make it “the best solution ever”. Computing was so new that customers (those who could actually afford computer goods) would condone some failures. Technology was so expensive that people preferred to commit to paying for a system and living with it until it was completely worn out. Also, there wasn’t much interoperability or compatibility with other systems, meaning systems wouldn’t talk to each other unless coded by the same company. Lastly, not many companies could get into the technology business, due to a lack of know-how and the expensive, risky investment it represented.

A side effect of these combined factors was that engineers didn’t have to worry too much about non-critical failures. Not that they didn’t care; it is just that they could rest assured their customers would have to wait for them to fix the issues, since those customers couldn’t afford to switch to a competing system and jeopardize all their data and investment. After all, there wasn’t much competition anyway, unless someone had a lot of bucks to start a new company with a real chance of snatching an existing business. In a few words: once you paid for a system, you were basically locked in.

Fast-forward several decades. Many of those factors have changed: technology is much cheaper, there are a lot of compatibility standards and protocols, and most people have access to computer goods. A side effect of these factors is that consumers demand permanent solutions in a very short (sometimes unrealistic) amount of time; if their demands are not satisfied fast enough and with the expected quality, they simply switch to the competition, knowing there will be a way to migrate all their current data into a new system (a lot of companies invest in the “buy my product and I’ll migrate all your data for you” business). Consumers have thus become very demanding and intolerant of failures, even subtle ones.

As a consequence, technology is very fast-paced today. New companies emerge out of nothing, and everyone has a chance to get a slice of the pie in this market without having to invest much. Moreover, there are also a lot of open-source projects that offer excellent solutions out of the box at low or no cost.

This can only mean one thing: companies have little to no room for failure. Any small annoyance could literally turn into bad press and a reason for bankruptcy. Companies are now pushed hard to succeed at the first attempt or die. Losing customers is very easy. Every time a company offers a product similar to yours and snatches some of your customers, it means you are clearly missing something that is preventing your customer base from growing and remaining loyal. Look at what happened to Nokia, Yahoo, RIM, Kodak, etc.

All this story leads to one conclusion: you cannot risk failing. And, when you do, you must recover very fast.

How does all this affect you as a software engineer?

For a start, in every way.

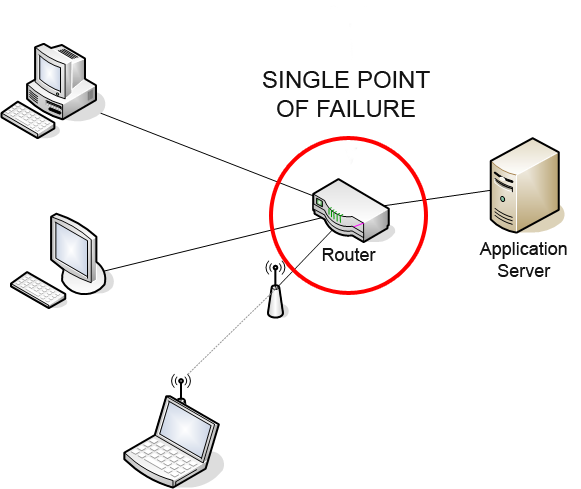

All systems are prone to failure for a wide range of reasons:

- From small network glitches to full data center blackouts.

- From curious users that “clicked that button”, to proactive developers that “found a way to call your APIs indiscriminately”.

- From annoying bugs introduced by your interns, to “artistic” bugs created by your architects (hidden deep down in the core of the system).

But before we get our hands dirty, let’s review the terminology of our subject so we can focus accurately on the solutions:

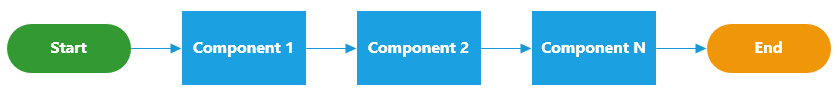

- System. A combination of two or more components that act harmoniously together.

- Systems are not just mere ‘apps’ or ‘web-pages’. They are complex living entities with heavy intercommunication and integration among all their components. Systems are large by nature and require multiple individuals and teams to work together to meet the release date with the desired features.

- Fault. Abnormal or unsatisfactory behavior.

- Tolerant. Immune, resilient, resistant. Keep going despite difficulties.

“Fault-avoidance is preferable to fault-tolerance in systems design” – The Art Of Systems Architecting (Maier and Rechtin)

We will cover neither fault-tolerant nor self-healing algorithms; we will focus only on best practices to make a system work as a whole.

Faults we want to avoid

The key faults we want to prevent are:

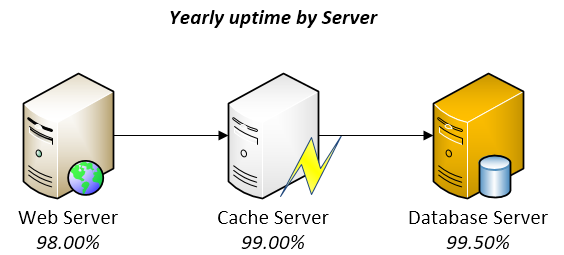

- Loss of service, loss of data or misleading results due to SW failure or HW corruption.

- Inability to recover from a generalized failure.

- Slow support responses or long recovery times.

Vital features of the product have to be carefully designed so that they remain up and running longer than anything else does. For example, if the servers of a cloud drive are experiencing failures, the system must attempt to use all its available resources so that users can still upload/download files and run virus scans, even if that means other features, such as thumbnail generation or email notifications, have to be completely turned off (think of a QoS analogy within SW boundaries).
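To make the cloud-drive example a bit more concrete, here is a minimal sketch of that kind of prioritization. Everything in it is an assumption made for illustration: the `Feature` class, the `select_features` function and the idea of measuring each feature in abstract "capacity units" are hypothetical, not part of any real product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Feature:
    name: str
    vital: bool
    cost: int  # hypothetical capacity units this feature needs


def select_features(features, capacity):
    """Return the names of the features to keep running.

    Vital features are admitted first. Optional features are only
    considered once every vital feature has been admitted.
    """
    vital = [f for f in features if f.vital]
    optional = [f for f in features if not f.vital]

    enabled = []
    remaining = capacity
    for f in vital:
        if f.cost <= remaining:
            enabled.append(f.name)
            remaining -= f.cost

    # Spend leftover capacity on extras only if all vital features fit.
    if len(enabled) == len(vital):
        for f in optional:
            if f.cost <= remaining:
                enabled.append(f.name)
                remaining -= f.cost
    return enabled


# Illustrative feature list for the cloud-drive example (costs are made up).
FEATURES = [
    Feature("thumbnails", vital=False, cost=3),
    Feature("upload_download", vital=True, cost=4),
    Feature("email_notifications", vital=False, cost=1),
    Feature("virus_scan", vital=True, cost=3),
]
```

With full capacity, every feature stays on; as capacity shrinks, thumbnails and email notifications are switched off before uploads, downloads or virus scans are touched. A real system would derive "capacity" from health checks and metrics rather than a single number.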

There are, of course, many other advantages to designing systems with fault tolerance (such as gains in performance and scalability in some cases) but, at the end of the day, they all fall into one of the three categories listed above, which basically sum up to “keep customers happy” and “be cost-effective”.

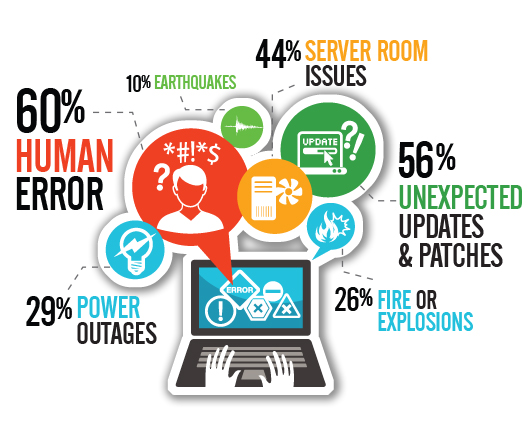

What causes the failures in systems?

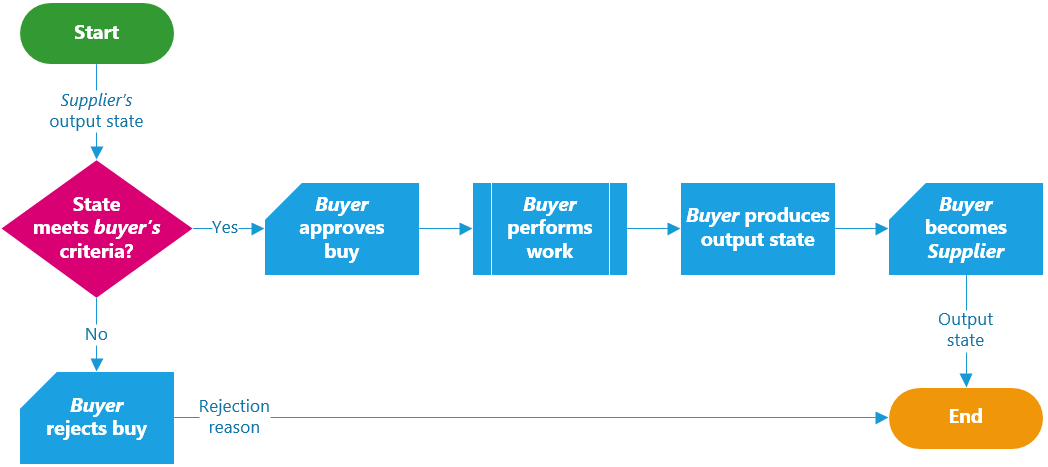

In general, failures show up after one or more faults happen. Some failures remain latent until a certain fault scenario is met.

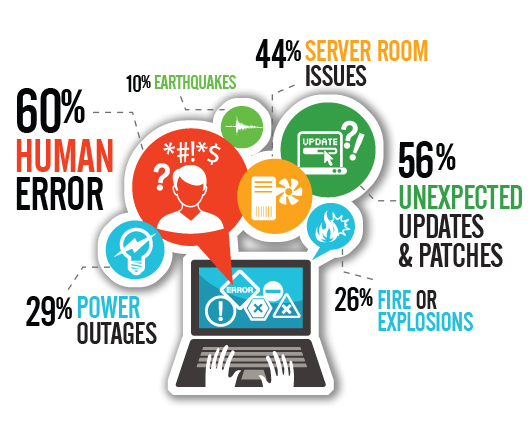

Faults can be introduced or caused by several factors, such as human mistakes, direct attacks or pure bad luck. Human mistakes are the major cause: systems are created by people and consume libraries created by people, they are tested by people, later deployed by people on HW designed by people, running on … you get the idea!

Once a fault starts, it creates more and more faults in cascade until one or more failures occur. For example, a memory leak might eventually consume all physical memory, which might make the system use virtual memory, which might make all threads run slower, which might exhaust the thread pool because threads are not being returned in time, which might cause threads to block and requests to pile up in the request queue, which might cause deadlocks, which might cause crashes (if the memory abuse itself did not cause the crash first).
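One common way to break a link in a cascade like this one is to bound the request queue, so that requests are rejected early instead of piling up without limit. The sketch below is only an illustration under that assumption; the `BoundedInbox` class and its method names are hypothetical, and it relies solely on Python’s standard `queue` module.

```python
import queue


class BoundedInbox:
    """Request queue that sheds load instead of growing without limit."""

    def __init__(self, max_pending):
        self._q = queue.Queue(maxsize=max_pending)
        self.rejected = 0  # how many requests were turned away

    def submit(self, request):
        """Try to enqueue a request; return False if the inbox is full."""
        try:
            self._q.put_nowait(request)
            return True
        except queue.Full:
            # Fail fast: the caller can retry later or show an error,
            # rather than waiting on a queue that keeps growing.
            self.rejected += 1
            return False

    def take(self):
        """Return the oldest pending request, or None if there is none."""
        try:
            return self._q.get_nowait()
        except queue.Empty:
            return None


inbox = BoundedInbox(max_pending=2)
results = [inbox.submit(r) for r in ("a", "b", "c")]
```

Here the third request is rejected immediately because only two may be pending at once. Rejecting a request is itself a small, visible fault, but it is far cheaper than letting the backlog grow until the whole process stalls.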

It is mostly impossible to predict when faults will happen or what will trigger them, but you can design your system to prevent the most common ways faults are introduced and to recover from them more quickly.

Faults decrease by doing the right thing

Since most faults are introduced by human mistakes, it makes sense to take the following two actions:

- Prevent situations that lead to sloppy, distracted, chaotic or careless development. This is mostly a human aspect.

- Design systems to detect, circumvent or recover from fault states that could not be prevented by other means. This is mostly a technical aspect.
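As a rough illustration of the second point, the sketch below detects a fault (an exception), tries to recover (retrying with a growing delay) and, if that does not work, circumvents it (calling a fallback). The function `call_with_recovery` and its parameters are hypothetical names chosen for this example, and catching every `Exception` is a simplification; real code would usually catch only the errors it knows how to handle.

```python
import time


def call_with_recovery(primary, fallback, attempts=3, base_delay=0.01,
                       sleep=time.sleep):
    """Call primary(); retry on failure, then fall back.

    The sleep function is injectable so callers (and tests) can skip waiting.
    """
    for attempt in range(attempts):
        try:
            return primary()
        except Exception:
            # Detect: the fault surfaced as an exception.
            if attempt < attempts - 1:
                # Recover: wait a little longer each time, then retry.
                sleep(base_delay * 2 ** attempt)
    # Circumvent: every attempt failed, so use the degraded path.
    return fallback()


def make_flaky(failures):
    """Build a function that fails the first `failures` times it is called."""
    state = {"calls": 0}

    def flaky():
        state["calls"] += 1
        if state["calls"] <= failures:
            raise RuntimeError("transient fault")
        return "fresh data"

    return flaky


def no_sleep(seconds):
    """Stand-in for time.sleep so the example runs instantly."""


recovered = call_with_recovery(make_flaky(2), lambda: "cached data",
                               sleep=no_sleep)
degraded = call_with_recovery(make_flaky(5), lambda: "cached data",
                              sleep=no_sleep)
```

In the first call the function fails twice and succeeds on the third attempt, so the caller never notices the fault. In the second call all three attempts fail and the caller gets the fallback value instead of an error.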

I have found that the most critical SW failures are commonly caused, either directly or indirectly, by companies’ organizational issues.

And it cannot be otherwise! Systems require a lot of people and teams working concurrently on each of the SW and HW pieces, which demands outstanding cross-communication and collaboration so they can produce as much as possible without stepping on each other’s toes, without breaking the build and without creating maintainability issues.

Designing systems with the right balance between budget, features-scope, code-quality and total effort is a choice the organization makes.

The way teams are managed seriously affects, positively or negatively, the quality of the final product.

Regarding technical matters, there are several best practices and well-proven patterns the tech staff can apply to avoid faults, or to recover from them more quickly. By using those techniques, the team can devote more time to the essence of SW, i.e. the features that make their product unique.

Let’s review what can be done at the organizational and technical levels in the next parts.

A Disaster-Recovery plan is a set of artifacts, e.g. documents, scripts, servers, contact names, tape backups, that will be used to restore services after a wide range of catastrophic events such as “all servers got corrupted” or “a
